This tutorial is an introduction to the .NET Core CLI tools. More precisely, it covers creating a web API with the CLI tools provided with .NET Core. Whether you are a beginner in development or just new to .NET Core, this tutorial is for you. However, you should be familiar with APIs and unit testing to get the most out of it. Today, we will set up a solution that groups an API project and a test project.

Tag: webservices

My Musketeers for DotNet Test-Driven Development

Posted in Building future-proof software and Productivity

Test: four letters, one meaning and, for some people, a struggle. Getting the people around you to write tests is easy only when everyone already agrees with you. As is often the case, some people resist writing tests. Here

D: I don’t have time to write tests.

A: I don’t need to test this.

B: I can’t write a test for this.

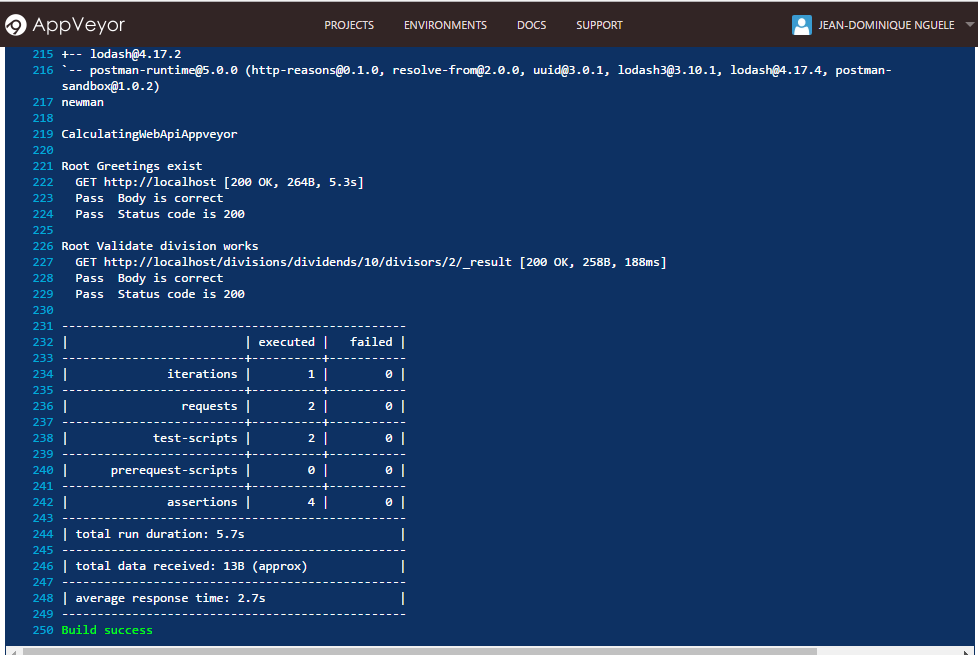

Simple continuous integration with AppVeyor and Newman

Posted in Building future-proof software, Productivity, and Tutorials

Last month, I wrote about how Postman lets you test your APIs with little effort so that you can build future-proof software. Here, we will set up continuous integration for a simple project, using Newman, Postman's command-line collection runner, to run your Postman collections. You may have heard of continuous integration before. Most commonly, continuous integration builds the software from someone's changes before or after merging them

Postman collections: Making API testing great again!

Posted in Building future-proof software, Development, and Tutorials

Turning shaky code into future-proof software

Over the past years

2019 update: there is now a guide to installing the AWS CLI tools on Mac. It has probably been there for a while, but still, after…