Nothing can be said to be sure in this world except that death, taxes and Day 7 will sting. Today’s puzzle took much more time…

Tag: coding

Hello everyone, it’s already been a year since I last took on this challenge. Rejoice! The new Advent of Code season has begun. Time for your…

Hey everyone. Some of you might have forgotten, just like I did, that there is much to rejoice about this year. The World Cup 2022…

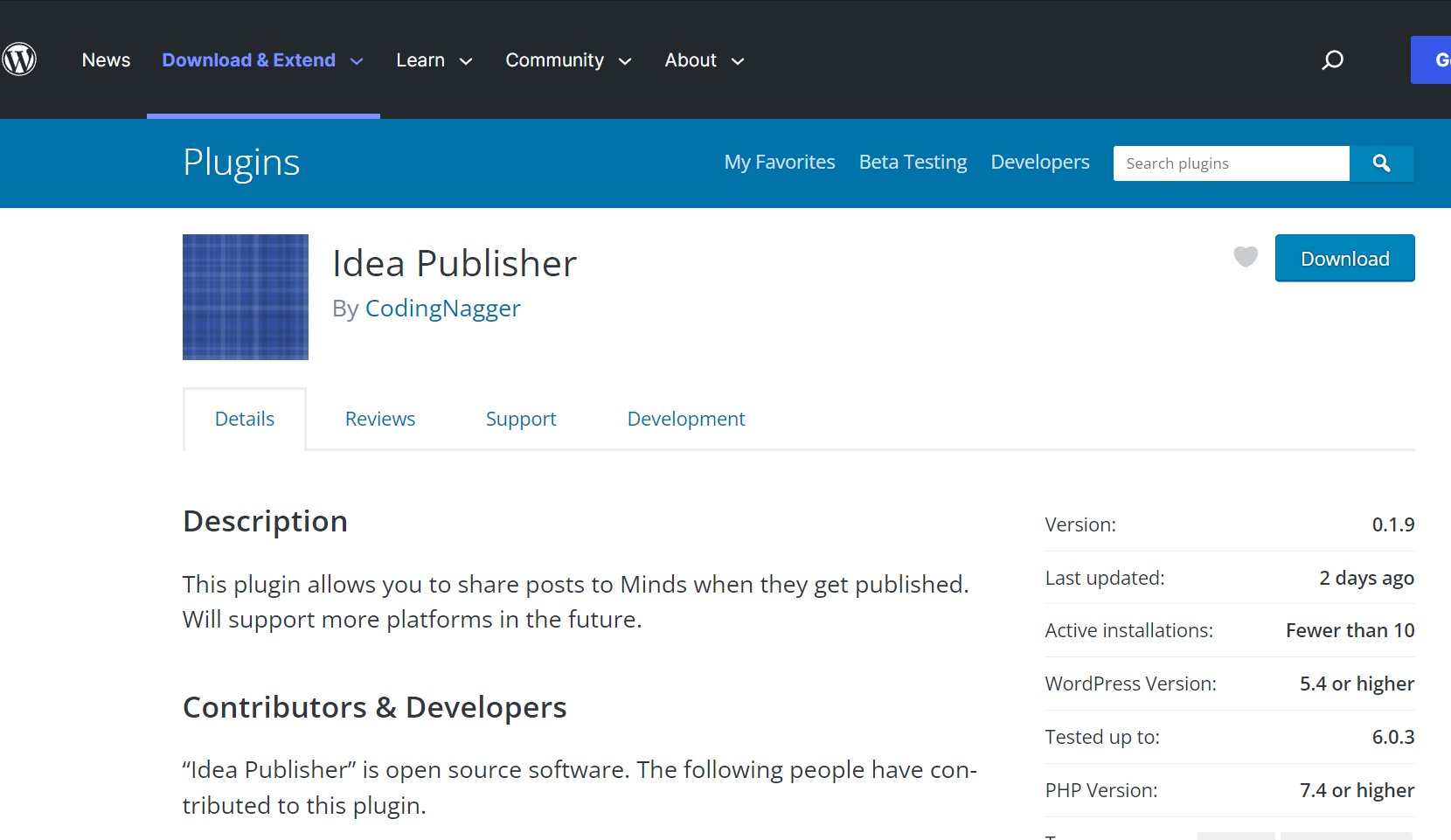

Fixing the Idea Publisher plugin is not something I thought I would need to do a day after announcing it, and yet here we are.…

Idea Publisher: My first WordPress plugin

Posted in Development and Experiences

A few months ago I had an idea. I use a diverse set of social platforms, from LinkedIn to Twitter to Minds. However, the latter…